Ask Me Anything with Arno Candel: The Future of Automatic Machine Learning and H2O Driverless AI

This video was recorded on May 5, 2020.

H2O Driverless AI employs the techniques of expert data scientists in an easy to use application that helps scale your data science efforts. Driverless AI empowers data scientists to work on projects faster using automation and state-of-the-art computing power from GPUs to accomplish tasks in minutes that used to take months. With the latest release of Driverless AI now available for download, Arno Candel, Chief Technology Officer at H2O.ai, was live to answer any and all questions.

In this video, we answer live audience questions and share what’s available in Driverless AI today and what’s to come in future releases.

Read the Full Transcript

Patrick Moran:

Okay. Let’s go ahead and get started. Hello and welcome, everyone. Thank you for joining us today for our Ask Me Anything session with Arno Candel on the future of automatic machine learning and H2O Driverless AI. My name is Patrick Moran. I’m on the marketing team here at H2O.ai and I’d love to start off by introducing our speaker. Arno Candel is the chief technology officer at H2O.ai. He is the main committer of H2O-3 and Driverless AI and has been designing and implementing high performance machine-learning algorithms since 2012. Previously, he spent a decade in supercomputing at ETH and SLAC, collaborating with CERN on next generation particle accelerators.

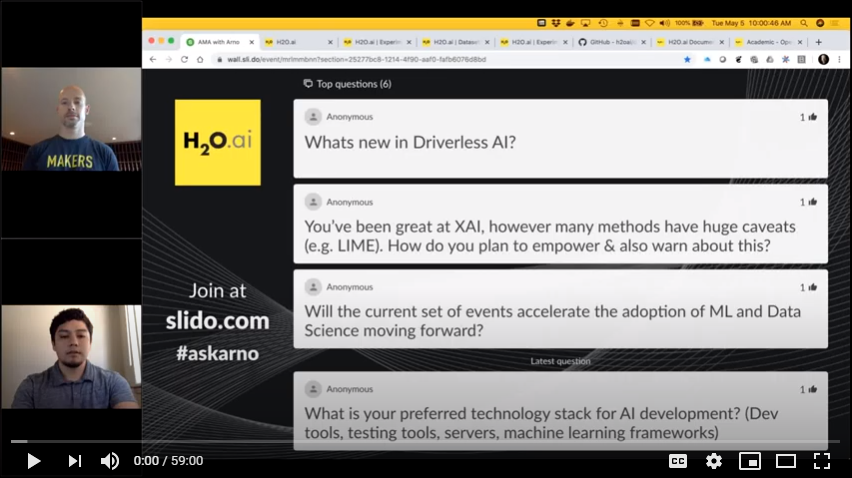

Arno holds a PhD and Masters summa cum laude in physics from ETH Zurich Switzerland. He was named the 2014 big data all-star by Fortune Magazine and featured by ETH Globe in 2015. You can follow him on Twitter @ArnoCandel. Now, before I hand it over to Arno, I’d like to go over the following housekeeping items. We’ll be using Slido.com to display your questions throughout the Ask Me Anything session. Please go to Slido.com and enter the event code AskArno. That’s one word. A-S-K-A-R-N-O. Once again, AskArno. A-S-K-A-R-N-O. We’ll be displaying all of your questions on Slido, and you are encouraged to up-vote the questions you’d like to hear answered first. The most popular questions will rise to the top of the display board, as you can see on the screen right now. Once again, Slido.com. AskArno. This session is being recorded, and we will provide you with a link to the copy of the session recording after the presentation is over. Now, without further ado, I’d like to hand it over to Arno.

Arno Candel:

Thanks, Patrick. Thanks everyone for joining today. I’m very blessed to be speaking for this great team at H2O. We have a tremendous team of people, not just the grand masters that are so popular, but also the software engineers. The entire team is really working together. The sales, the business units, they’re all coming together. The products that we’ve made in the past have been really novel and innovative and Driverless AI is continuing this tradition where it’s something new that you haven’t seen before. And in this demonstration today, if I get to do that, that would be my privilege, but I really would love to answer some of the questions that you have, and also where the times are, of course, it’s challenging these days. What can we do about that? What’s the role of data scientists? What is machine-learning going to do? And so on.

Driverless AI is addressing some of those issues already in its latest incarnations, and I will show you what’s new in Driverless AI to start with and I’ll give a very quick introduction to Driverless AI so that everybody is on the same page. Let me share my slides here. As you know, Driverless AI is a platform for building machine-learning models. That’s the gist of it. But the machine-learning models aren’t just any machine-learning models. They’re actually rich pipelines full of feature engineering, and the feature engineering is the magic sauce inside this platform where it takes the data and changes the shape of it slightly so that the juice can be extracted in a better way. The signal to noise ratio is very high. We can get the best models in the world, and not just for tabular data sets, but also for text, or time series, or now images.

They’re all things basically meant to help data scientists to get to production faster. What does it mean, “production”? It means you get a model out at the end that’s usable, that’s good, that’s validated, that’s documented, that has a production pipeline that is ready for production. And the production pipeline has to run in Java; not in Python, necessarily. It has to run maybe in C++ somewhere, and all of this is automatically generated by the platform, and it can train fast on GPUs and so on. So, it’s well designed to be the best friend of a data scientist. In the last two or three years, we’ve been coming up with a lot of features, things that our customer support and all of us, we’ve been looking at this whole thing as like visionaries. We wanted to make sure that the product can do stuff that nobody would think was possible two or three years ago, and now it’s reality. It’s just one more milestone along this roadmap.

We started at the grandmaster recipes, and then it became GPUs, and then it became time series. It became machine-learning interpretability, automatic documentation, automatic visualization, connectors to all the clouds and all the data sources. It became suddenly deployable on Java and C++ to take the whole pipeline to production as it is with all the feature engineering. This was a miracle, almost. And then we also shipped it to all the clouds, on prem, anywhere, so it’s really just a software package. We don’t care where it runs. Also, it has Python and R client APIs. Now, last year we introduced Bring Your Own Recipe, which means you can make your own custom recipes inside of Driverless. You can make your own algorithm, your own data, your own feature engineering, your own scorers, KPIs, and your own data pre-processing, and all of that is Python code.

You can really customize anything. You can add your own PyTorch-Transformers if you want. You can add anything. You can download, join, group by, augment. You can make your own marketing campaign cost scorers. Whatever you can think of in Python land, you can do in Driverless. That’s the rule. And that’s pretty amazing. I would like to say that in the next generation, version 2.0, which will also be long-term support just like 1.8 is right now, we’ll have another milestone which is multi-node, multi-tenancy, multi-user, model import/export and all that. Once you have the models in the model deployment platform, you can manage them. You can say, “Hey, is this model better than the previous model?” A/B testing or challenger models, models that are drifting, automatically retrained or alerts raised, or different kinds of alerts. You can even do custom recipes for those alerts.

We want to empower the data scientists to infiltrate the whole organization. Back to data improvements. We’ll tell the data scientists through Driverless AI what to improve in the data, and then once they know what to improve in the data, they get better data from the upstream data engineering, and then can make insights with Driverless, and then pass on those insights downstream to the business units that consume those applications. We want to make sure that the data scientists are not just given a data set and then they play with it, but they actually can impact the business outcome by going both to the business side, but also to the data engineering side to ask for improved data. Also, the next generation platform will have custom problem types, so it will have image segmentation, for example. Multi-task, multi-target learning. Whatever you want to do, you can do in the Driverless platform. That’s our vision for the future.

This is just a short list of the new features we introduced in our current generally available offering of 1.8, which is the custom recipes, the project workspaces, and then a bunch of smaller, simpler models, and then also more diagnostics, like more validation scores and so on. I’ll show some of those things later, but as you can imagine we connect to pretty much any data source in the cloud or on-prem. Hadoop, Oracle databases, Snowflake, you name it, we have it. Custom data recipes allow you to quickly prototype your pipelines, so you can make up new features, or compute aggregations, or convert transactional to i.i.d. data, whatever you want to do as a recipe, as a Python script. You can look at the data quickly. In one click you get the visualization of the relevant information that matters. The feature engineering is our strength, if you want. We make features that are very difficult to engineer and hard to come up with if you’re just starting out, let’s say, and it can have a lot of information in there such as interactions between numeric and categorical columns, and so on.

And then there is an evolution of the pipeline where not just the features are selected and the models are selected, but all the parameters are tuned, of the feature engineering and of the pipeline parts that are models. We have millions and millions of parameters that are being tuned and refined, and in the end the strongest will survive and that will be the final production pipeline. We will make sure that the numbers that we report are valid on future data sets if they’re from the same distribution. So, hold out scores, validation scores that are trustworthy. And of course there are a lot of statistics going on. Deep learning. Not only deep learning; also gradient boosting. All kinds of techniques to reduce dimensionality, to do aggregations of certain statistics across groups. For example, for every city, every zip code, we get some mean number, but only out of fold, so it’s all a fair estimate of the behavior of populations and so on, and all that is done automatically for you.
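To make the "mean per city, but only out of fold" idea concrete, here is a minimal sketch in plain Python. It is not Driverless AI's implementation; the function name, the round-robin fold assignment, and the fallback to the global mean are assumptions made for illustration only.

```python
from collections import defaultdict

def out_of_fold_target_mean(groups, targets, n_folds=3):
    """Encode each row's group by the mean target of that group,
    computed only from rows that sit in the OTHER folds."""
    n = len(groups)
    global_mean = sum(targets) / n
    # Hypothetical fold assignment: simple round-robin by row index.
    fold_of = [i % n_folds for i in range(n)]
    encoded = [0.0] * n
    for fold in range(n_folds):
        sums = defaultdict(float)
        counts = defaultdict(int)
        # Accumulate group statistics from every row outside this fold.
        for i in range(n):
            if fold_of[i] != fold:
                sums[groups[i]] += targets[i]
                counts[groups[i]] += 1
        # Encode rows inside this fold using only those outside statistics.
        for i in range(n):
            if fold_of[i] == fold:
                g = groups[i]
                encoded[i] = sums[g] / counts[g] if counts[g] else global_mean
    return encoded

cities = ["A", "A", "A", "B", "B", "B"]
y = [1, 0, 1, 0, 0, 1]
enc = out_of_fold_target_mean(cities, y, n_folds=3)
```

Because a row never contributes to its own encoding, the feature cannot leak that row's target into the model, which is what keeps the estimate "fair" in the sense described above.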

If you’re an expert, you can control it all with these expert settings. There are several pages of these where you can control everything. For time series, there is, of course, a causality that you have to be worried about. You can’t know about COVID until it’s happened; you can’t have the knowledge about COVID last November or so. This is exactly why these validation splits are so important, especially for time series. But sometimes you also have to do validation splits for non-time series problems that are specific, like with a fold column, only split by city or something, and then we shuffle the data. Driverless can help with that as well. For natural language processing, there are many different approaches. With the custom recipes you can do anything you want, but in 1.8 you have TensorFlow convolutional and recurrent models, in addition to the linear models and the text features that are statistical, but in version 1.9 you’ll have BERT models, PyTorch BERT models, which can improve the accuracy even further.
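The causality point can be sketched as a split by time rather than at random. This is only an illustration in plain Python; the row layout, the `day` field, and the cutoff date are invented for the example and are not taken from the product.

```python
from datetime import date

def time_based_split(rows, cutoff):
    """Train on everything strictly before `cutoff`, validate on the rest,
    so the model never sees information from the future."""
    train = [r for r in rows if r["day"] < cutoff]
    valid = [r for r in rows if r["day"] >= cutoff]
    return train, valid

# Hypothetical demand series with a sudden change in early 2020.
rows = [
    {"day": date(2019, 11, 15), "demand": 100},
    {"day": date(2019, 12, 15), "demand": 110},
    {"day": date(2020, 1, 15), "demand": 105},
    {"day": date(2020, 3, 15), "demand": 40},
    {"day": date(2020, 4, 15), "demand": 35},
]
train, valid = time_based_split(rows, cutoff=date(2020, 3, 1))
```

A random shuffle would put some of the March and April rows into training, and the validation score would then look better than anything achievable on genuinely unseen future data.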

Machine-learning interpretability has been a key strength of Driverless AI for the last few years, and we have further enriched it with disparate impact analysis where you can see how fair a model is across different pieces of the population and different metrics so you can see, is it singling out somebody or some specific feature of the data set. It doesn’t have to be just people. It can also be just in general. Is your model somehow biased? You want to know that. And then there is a stress testing capability where it’s like a “What if?” analysis, a sensitivity analysis, where you can, say, if everybody was a little bit older in this zip code, what would happen? Or globally. Or if everybody had more money, what would I have done? It’s basically an exploration in the meaning of the model’s behavior. There has been a new project workspace where you can compare models that are organized by you, so you can say, “Here’s the data set and here are my models for this data set,” and then you can sort them by, let’s say, validation score, or by the time to train them, and so on. And you can then look at them visually and see the models, and so on. There’s a way to quickly compartmentalize your experiment.
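As a rough illustration of what a disparate impact check measures, the sketch below compares the rate of favorable predictions between two groups. It is not the Driverless AI implementation; the function names and the sample data are assumptions, and the 0.8 threshold mentioned afterwards is a commonly cited rule of thumb rather than something stated in this session.

```python
def positive_rate(predictions):
    """Share of rows that received the favorable (1) prediction."""
    return sum(predictions) / len(predictions)

def disparate_impact(predictions, groups, protected, reference):
    """Ratio of favorable-outcome rates: protected group over reference group."""
    prot = [p for p, g in zip(predictions, groups) if g == protected]
    ref = [p for p, g in zip(predictions, groups) if g == reference]
    return positive_rate(prot) / positive_rate(ref)

# Hypothetical binary predictions for two groups of four people each.
preds = [1, 0, 0, 0, 1, 1, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
ratio = disparate_impact(preds, groups, protected="a", reference="b")
```

A ratio near 1 means both groups receive favorable predictions at similar rates. Values well below 1 (a threshold of 0.8 is often used) suggest the model may be singling out the protected group and deserve a closer look.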

This whole block will then later be exportable in 1.9 so you can share this whole experiment project page with other people. You can share entire experiments based on the project they belong to. In 1.8, we also added a scores tab, which means in the GUI you can list statistics about the different models. For example, in the leaderboard, you can see at a glance that the BERT model is still better than statistical models or better than TensorFlow models. You quickly see a leaderboard, [inaudible] feature evolution from the model tuning part where you can quickly see what’s happening right now. You can also see the final pipeline, how it is behaving, which model is contributing how much to the final pipeline, how many cross-validation folds it had, and if it had cross-validation folds, then what the score was in each of these folds. The out of fold predictions. How did they do on each fold? Is it stable across folds or is there some noise in the data? And it helps you to see all the metrics.

As I mentioned earlier, we have Python and R clients where you can remote control Driverless, so if you don’t like the GUI you can do everything from the command line or from the programming APIs, and everything is automatically generated, so you will always have the latest version of all these APIs for every version that we share. And then when the model is done you can score the models in either Java or R or Python or C++, which is what R and Python are using underneath, or you can, of course, call the GUI and say, “Please score me this data set.” The GUI can always score using the Python version. There’s two different Python versions. One is using the C++ low latency scoring path, and one is using the full pickled state that was trained during training time. So, there’s a bunch of ways to score and it’s quite fascinating to see how people are using those in production.

For example, you can train a model with GPUs, XGBoost, and TensorFlow. Then you get a Java representation of the whole pipeline. You stick that into Spark and you score on terabytes in parallel at once, and you have no loss of accuracy. This is what such a pipeline looks like, so you can see all the pieces in the pipeline from the left side. Your original features coming in, getting transformed into datetime features or lags, and then aggregations between the lags, and then fed into LightGBM to make a prediction. A decision tree, for example, would be visualized like this so you can actually look at the split decisions and see what the outcome is for each case. So, there’s a lot of transparency in our pipelines. Everything is stored as a protobuf, so it’s a binary representation of these states and you can inspect them, and you see what you get.
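The "lags, then aggregations between the lags" step can be sketched in a few lines of plain Python. This is an illustrative sketch only; the helper names and the choice of a simple mean as the aggregation are assumptions, not the transformers the product actually generates.

```python
def add_lags(series, lags):
    """For each time step, collect the values observed `lag` steps earlier
    (None where there is not enough history yet)."""
    rows = []
    for t, value in enumerate(series):
        row = {"y": value}
        for lag in lags:
            row[f"lag_{lag}"] = series[t - lag] if t - lag >= 0 else None
        rows.append(row)
    return rows

def lag_mean(row, lags):
    """Aggregate across the available lags of one row with a simple mean."""
    values = [row[f"lag_{k}"] for k in lags if row[f"lag_{k}"] is not None]
    return sum(values) / len(values) if values else None

sales = [10, 12, 11, 15, 14]
rows = add_lags(sales, lags=(1, 2))
features = [lag_mean(r, (1, 2)) for r in rows]
```

Each row now carries only information from earlier time steps, so a tabular learner such as a gradient boosting model can be trained on it without looking into the future.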

This is absolutely the full pipeline that we are visualizing, which is being productionized in the end. So, no shortcuts. Every piece that we ship out of the box has a deployment version of it for Java and C++. And if it’s not yet in Java, then it will be soon because it can call C++. TensorFlow and PyTorch, for example, they work in C++, but once you have Java, you can make a JNI call. We’re working on giving that to you out of the box as well. So, the rounding out of all these extra cool AI features in the production pipeline is happening right now. This is what it looks like in Python when you load this protobuf state and you say, “Score” you immediately get the response in Python, and there is no dependency on any pip installs of TensorFlow or whatever. It’s all built into that protobuf state, and it’s serialized into a blob of bytes, so you don’t need to worry about any dependencies.

You might not like the MOJO size; sometimes they can be three gigabytes or bigger for a normal 100 megabyte dataset because this is a grandmaster level Kaggle winning pipeline. In this case, it’s 10th place out of 2,000 or so in Kaggle. This is out of the box with one push of a button, right? But sometimes you say, “I don’t want 15 models. I don’t want 700 features. It’s too complicated.” You have a new button there that says, “Reduce the size of the MOJO,” and you click it. The whole thing becomes 16 megabytes, and it’s faster to train, faster to score. The accuracy is still pretty good. So, maybe start with that, see how it behaves.

But, as I mentioned, those are just some presets that we apply. You can also change those presets and make your own custom size of the overall pipeline. You can always reduce it to not be an ensemble or not have more than so many features or not have high order interactions and so on in the feature engineering. So, everything is basically controllable by you. Once you’re done with the whole modeling, you can deploy it to Amazon or to the REST server that’s built in or all these other places. You can go with Java and C++ that I mentioned before. These are just two demo things that we built into the product. And then the open source recipes, the custom recipes, you can do whatever you can do in Python. That’s very powerful, right?

Anything that you can imagine. Your own target encoding, your own speech-to-text featurization, your own scorers based on some quantile of the population, or your own scorer that’s fair across different adversely impacted populations, or your own data preparation where you take three data sets and join them and split them exactly the right way, and then you return all five at once in one script. All this is doable today. And you can make your custom models. You can bring CatBoost, for example. You can bring MXNet. You write your own algo, you bring it in. That’s how easy it is. We have over 140. Now, it’s, I think, 150 open recipes where you can see how it’s done and you can customize them or do whatever you want. It stays your IP and you can have your insights be part of the platform.

This is just what it looks like. You can bring all the H2O open source models, for example, into the platform. There’s more. There’s ExtraTrees from scikit-learn and so on. In the end, you can just compare them, and you see that BERT has a lower log loss. In this case, 0.38, versus the built-in ones that only get to 0.5 if you enable TensorFlow. If you don’t enable TensorFlow, then you only get a 0.6 log loss. You can see it is quite a milestone here that you can get to that lower loss for the sentiment analysis. This is a bunch of Tweets and you want to predict the sentiment. It’s a three-class problem, so positive, negative, or neutral.
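For readers who want to place the 0.38, 0.5, and 0.6 figures on a scale, the sketch below computes multiclass log loss by hand. The function and the sample probabilities are illustrative assumptions; only the definition of log loss itself is standard.

```python
import math

def log_loss(actual, predicted_probs, eps=1e-15):
    """Mean negative log of the probability assigned to the true class."""
    total = 0.0
    for label, probs in zip(actual, predicted_probs):
        # Clip to avoid log(0) when a model is fully confident and wrong.
        p = min(max(probs[label], eps), 1 - eps)
        total -= math.log(p)
    return total / len(actual)

# A model that always guesses uniformly across the three sentiment classes.
uniform = {"positive": 1 / 3, "negative": 1 / 3, "neutral": 1 / 3}
baseline = log_loss(["positive", "negative", "neutral"], [uniform] * 3)

# A hypothetical confident and correct prediction for a single tweet.
confident = log_loss(["positive"], [{"positive": 0.9, "negative": 0.05, "neutral": 0.05}])
```

Uniform guessing on three classes gives a log loss of ln 3, roughly 1.10, so all three quoted scores are far better than chance, and lower is better.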

You can imagine that having the knowledge of the language, like the BERT model does, means a lot, right? If you understand what someone is saying, by looking both forward and backward in the sentence using these smart neural nets, then of course it’s better than just counting the number of “ands” and “ors” and “hellos” and so on in a sentence. That’s what it is. The same is true for time series. If you have a Facebook Prophet model or ARIMA model that you like, you can bring it in as a recipe and then you’ll have a different score than what we have out of the box, and sometimes it’s better, sometimes it’s worse, but the interpretability might suffer a little bit because you only have your black box that now makes predictions, and that’s all you will see in the listing of the feature importances.

But in our case, you would at least still have “this lag of this feature matters this much to our model.” Right? Once you make your black box models, you need to think about how you can give them the variable importance back, and if you can do that then we will show your variable importance. If you make your own random forest, then we will show that, of course. The point here is that whatever you do, you need to still be in charge of it, and that’s why we try to do as much as we can out of the box, but sometimes, like I said, there is a trade-off between interpretability and predictive accuracy, and you can see it here in the case of Prophet. In the end, it’s not better, but it just is one black box oracle model versus a bunch of lags going into XGBoost, for example.

The last thing that I want to mention from the introduction slide section is the automatic documentation. You get a 20 or 30 page Word document for every experiment filled with distribution plots and leaderboards and parameters that were used, and it shows you a lot about what’s going on. I will say this is very useful, especially for regulated industries or people who want to keep track of what happened, and then we can dive into the sneak preview into what’s coming next, but I’ll keep that for a minute and I’ll address maybe one question in the meantime. Let me look at the Slido page.

Yes. “What’s the value of data scientists and machine-learning specialists these days?” I would say even more than ever. Imagine there was a time when there were no computers. Everybody said, “Oh, wow. These robots are going to take over my job. Are they going to replace people who type in stuff or who make decisions?” Yeah, of course they will, but you will still be working with them. Everybody now has a laptop or an iPhone in their face all day long, so you’re going to work with those computers, and if you don’t, someone else will. There is definitely a need to do that in our competitive world, and you will benefit from these tools, or platforms, whatever you call them, or you won’t. If you’re a good data scientist in 2030 and you don’t use these tools, then you better have something else up your sleeve.

There is no way that people who want to be competitive don’t use these tools. That’s another way of seeing it. They will add value in many places. As I mentioned, you want to be involved with the data preparation step. For example, in Driverless, when you have an experiment, the next version will tell you that in Minnesota there wasn’t enough information to make conclusive predictions, so get better data for Minnesota. That’s all possible by the model itself, to figure out where it’s strong and where it’s weak. That can be something you can bring back to your data engineering team and say, “Why don’t we have good data in Minnesota?” Or you can bring it to your management team on the business side and say, “That’s the reason we don’t make money.” And then they will say, “Well, maybe we shouldn’t do business there,” or something.

These are all ideas, roughly speaking, where you as a data scientist can be more involved in the overall business, and you want to be entrenched as a business strategist. You don’t want to just be given a dataset and then you play with it for five weeks and then you say, “Oh, well I have a good AUC.” These days are over, I would say. It’s more important to know what it means and to know what it exactly does right now, and if that assumption is no longer true, then you should change the overall pipeline and the place where it fits. Tools like ours will help you figure out what piece of the signal is actually strong and what piece of the signal is weak and what’s driving what.

We’re getting more into the causal place, let’s say. No longer just, “Oh, yeah. I have so much AUC.” AUC alone, or whatever it is, is not a good number. It’s one number and it’s just, “Okay, fine, I can sort it, but then why, and what, and what happens if this doesn’t hold? What happens if everybody gets different, the distribution changes? How robust is my model?” We will do the best we can to make all the models we build tailored to be as robust as possible, for example. You will have a knob that tells you the robustness; how much of a price for robustness are you willing to pay. And then there will be simulations that show you what the trade-off is.

The next question is, “What is my preferred stack?” I use PyCharm a lot for Python development and we use just Linux servers. I use vi plugins in PyCharm and I usually have multiple servers with GPUs in them, and some without GPUs, and a lot of RAM. They all have NVMe solid state drives. They all have either i9 or Xeon chips. I will say a lot of memory is nice to have, but sometimes it’s actually better to have not so much, so 64 GB is our low end memory version, so to speak. To make everything work with less memory is also a good thing. You want to be efficient. I would say get two GPUs at least in your dev boxes. That way you can parallelize a lot of the model building, not just TensorFlow and PyTorch models. Also XGBoost and so on. LightGBM. If you can train two of these models in parallel, then per time unit you get roughly five to 10 times the throughput than if you had a CPU only. So, we benefit from GPUs.

And NVMe drives, these are solid state drives, they do three gigabytes a second and they only cost you a few hundred bucks. Definitely a good investment because you can read and write files super fast, especially since we’re using datatable, which is the fastest CSV reader and writer. You can write 50 GB to a CSV in less than a minute. Yeah. I think that was a minute to do the join and the write, and the read actually, from CSV back to CSV. So, the whole thing was done in one minute for 50 GB, and that’s all because it’s limited by the I/O of the disk, so you want solid state drives.

Okay. What is the next question? Oh, yeah. Perfect. Exactly. That’s a good one. There are sometimes problems where you have the same person show up multiple times. I remember the Allstate distracted driver challenge in Kaggle. They had a bunch of rows, and every row belonged to a person, but the person showed up several times in the same data set. You would have one person being photographed holding the cell phone like this and then maybe looking out the window, looking back in the car, looking down, holding the radio knob, and so on, and you had to tell what they are doing; which of the 10 things are the drivers doing that distract them, or maybe they were driving normally. If you just fit a model on all these images, then of course it will remember that the person with the blue shirt is the one that is maybe distracted or something. It’s not actually learning that it’s the arm that matters, but that it’s the shirt color that matters because it already saw that somewhere else, and so on.

So, you don’t want the same event, photographed multiple times, split across training and validation. Like, me holding my cell phone in five different photos. And if the model sees three of them in training, it can remember, “Oh, if there’s a white arm here, then it means he’s holding the phone,” but it shouldn’t have been remembering my arm color. It should’ve been remembering the arm posture or something. So, it’s very important not to have the other two pictures in the validation set, but to have them either all in training or all in validation. For that, you would use the fold column. When you get these cardinality limits, that usually will tell you that it’s something that’s approaching an ID column. That can happen if you have too many uniques. It doesn’t really tell you that much anymore because every row is different, so what’s the point?

Now, if it’s a categorical, that could be a problem. Then it’s just an ID. If it’s a numeric column, like the number of molecules in your blood right now, every one of us here will have a different number, right? And then it’s okay to be different. So, it’s kind of tricky to handle all these cases, but we’re trying to do our best job with the data types and the data science types. One type is the data type stored in the file. Another type is the type of the data; how it should be used when you’re actually going into modeling. Certain cardinality thresholds can be used to decide whether or not a feature is numeric or categorical. If it has too many uniques and it’s a number, then it shouldn’t be treated as a categorical anymore. Then it should be just a number, as I mentioned.
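A cardinality-based decision of this kind might look like the following sketch. The thresholds (`max_categories`, `id_ratio`) and the function name are made up for illustration; the actual limits used by the product are configurable and are not stated in this session.

```python
def infer_role(values, is_numeric, max_categories=50, id_ratio=0.95):
    """Guess how a column should be used for modeling from its cardinality.

    Both thresholds are hypothetical defaults chosen for this example.
    """
    n_unique = len(set(values))
    unique_ratio = n_unique / len(values)
    if is_numeric:
        # Few distinct numbers can act like categories; many are just numbers.
        return "categorical" if n_unique <= max_categories else "numeric"
    # A non-numeric column where almost every row is unique behaves like an ID.
    if unique_ratio >= id_ratio:
        return "id"
    return "categorical"

role_ids = infer_role(["u1", "u2", "u3", "u4"], is_numeric=False)
role_measure = infer_role(list(range(100)), is_numeric=True)
role_codes = infer_role([1, 2, 3, 1, 2, 3], is_numeric=True)
role_colors = infer_role(["red", "blue", "red", "blue"], is_numeric=False)
```

The storage type (string or number) and the modeling role are kept separate here on purpose, mirroring the distinction between the data type in the file and the data science type.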

But I will say the fold column is something that’s often overlooked. You can also make your own fold column. That is the column that tells you, “For this value, you’re either left or right. Training or validation.” If that fold column is, for example, the month of the year, then you can say, “I want to train on January, February, and March, and predict on April.” But then it will also train on April, March, and January, and predict on February. If you can do that, well, maybe that is a good thing depending on your data. That just means your model is more robust over time and doesn’t really need to know everything about the past to predict the future. Maybe it’s okay to do that sometimes, but you have to be an expert. That’s why the fold column is a user-defined choice: which column should I use for that?
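The role of a fold column can be sketched as a grouped split: every row sharing a fold value lands entirely in training or entirely in validation. The helper names and the tiny driver data set below are hypothetical and only illustrate the idea.

```python
def split_by_fold_column(rows, fold_key, validation_values):
    """Rows whose fold value is in `validation_values` go to validation,
    all others to training, so no fold value is ever split across both."""
    validation_values = set(validation_values)
    train = [r for r in rows if r[fold_key] not in validation_values]
    valid = [r for r in rows if r[fold_key] in validation_values]
    return train, valid

def leave_one_group_out(rows, fold_key):
    """Yield one (held_out_value, train, valid) split per distinct fold value."""
    for value in sorted({r[fold_key] for r in rows}):
        train, valid = split_by_fold_column(rows, fold_key, [value])
        yield value, train, valid

# Hypothetical photos: the same driver appears in several rows.
photos = [
    {"driver": "d1", "label": "phone"},
    {"driver": "d1", "label": "radio"},
    {"driver": "d2", "label": "normal"},
    {"driver": "d2", "label": "phone"},
    {"driver": "d3", "label": "normal"},
]
folds = list(leave_one_group_out(photos, "driver"))
```

With `driver` as the fold column, a model can no longer score well on validation just by recognizing a shirt or an arm it already saw in training. Using the month as the fold column gives the behavior described above, where each month is predicted from the others.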

Okay. Let’s go to the next question. This is about explainable AI and how we can avoid having problems with it and how do we empower it. I would say that’s a good point to go back to the slides. The sneak preview into 1.9, which is what we’re working on right now, the team has been relentless at building new products in the last few months, not just COVID modeling as you might’ve seen in the news or in our blogs, but also in making multi-node work, collaboration between people work, model management, deployment, monitoring work. This is called ModelOps. Then MLI has gotten a full, fresh face, and also custom recipes. I’ll talk about that some more later. Then the leaderboard project that I mentioned earlier with the press of one button you’ll actually get a full leaderboard of relevant models.

In the future, in 1.9, if you have a dataset you can not just start an experiment, but start a leaderboard, and that will make you 10 or more models at once. Some models are simple models. Some models are complicated. Some have complicated features. Some have easy features. This trade-off between the feature engineering and the modeling, that goes right into this explainability, right? I can make a one feature model that’s very good because the feature is itself a deep learning model using BERT and embeddings. And then you can say, “Oh, wow. I have a GLM model with one coefficient and I get the sentiment right every time.” Well, yeah, because the model got the transformed data output, which is the feature engineering pipeline. It’s not the model that mattered. It was the pipeline that mattered. We are free to change the data along the way before it goes to GLM, right? That’s called feature engineering.

One such feature could be the BERT embedding extractor, and once you do the BERT embedding extractor, suddenly your feature is much more useful than the raw text, and then suddenly your one-coefficient GLM is amazing. When you say you want an explainable model, does it mean you want a GLM or does it mean you want no more than 10 kilobytes for your overall pipeline, or does it mean you want to know what’s in the pipeline? These are all good questions. Another question could be maybe you don’t really have to explain the model. You just have to show that it’s robust. Maybe in this day and age it’s futile to try to explain a BERT model to every last number because it contains hundreds of megabytes of state and you’re never going to explain it, so you might as well just look at how strong it is in terms of stability.

What happens if I do adversarial attacks and robustness testing and show you that my model is super stable? Would you be happy with that as a replacement for explainability, if you want? Is it good enough to just do model debugging and show you that it’s super robust? No matter what you attack it with, it’s always giving you the best answer. And maybe it does need this extra complexity to be that good, right? If you have a simple model, maybe it’s not that good at being strong under adversarial impact. If I attack you with slightly modified data, maybe the simple model will falter, but maybe my super complicated black box model will not. Maybe you’re actually better off getting that model instead of the simple one.

It’s a very fast, evolving space. The explainability is one aspect, but the definition of what is explainable is also not easy, right? Not everybody will say, “Oh, I just need to understand what happens.” Well then you can study model X and then you know what happens. Is that enough? “No, no. It has to be a little bit simpler. It has to be explainable.” Well, does it need to have one explanation or can it have a million different explanations for a same outcome? Because often you have a lot of local minima that are all the same in terms of outcome, but they’re all different. Each one is different. You can say, “Oh, this is what happened,” and then the next guy will say, “Oh, this is what happened,” and it’s the same number that comes out and neither one is right because it’s just one of many possible explanations for the same outcome, and then it becomes useless, right?

Our goal is to find something that’s unique and still simple, and if that’s not possible then it’s probably better to be complex, but then actually robust. There might be a phase transition to “Show me that the model is stable” versus “Show me that the model is simple.” The next thing I would like to mention is the prediction intervals and, in general, residual analysis. We’ll have another slide later, but imagine you knew what the model did. Okay. Fine. We have a model that predicts. Now, imagine you knew where the model was weak. Every time you make a prediction for someone in Minnesota, it’s bad. Much bigger error, as measured in the holdout predictions. Well then, you can try to say, “Hey, I need better data for Minnesota,” or look into what’s going on, right? That’s what I mentioned earlier.

Maybe you can make a model that’s better for those. Maybe you can use that as a metric to guide your optimization process. Do not have any subgroup that’s worse than the other subgroup by this much. Make them all the same. And then your overall leaderboard that automatically gets filled could be scored by this robustness metric, and then you will have that as the guiding post for you and not just the accuracy, for example. It will be accuracy divided by some other number that is based on the fairness or the stability, and that could be something very useful, and that’s all doable today because the scorers, as you can see in the very bottom, the metrics that compute the number, they’re not just looking at actual predicted, like cat/dog/mouse and then the three probabilities and then you say, “What’s my log loss?”

No. In the new version you can actually pass the whole dataset. You can say, “Oh, I’m from Minnesota and I have this and this condition, and hence, I’m going to know exactly what I’m going to do with my scorer to look at the fairness.” I can look at the subpopulation and so on and convey it differently, and I could, in theory, come up with a scorer that looks at the holistic view and makes it fair. So, there’s a lot of ideas, and we’re adding these recipes in piece by piece. Other recipes to be added were the zero-inflated models where you have a lot of zeroes for insurances. They don’t pay you most of the time. Your claim amount is zero. But once you have a car accident, it’s $6,000. So, the distribution starts at zero. Most people have a peak there. Everybody has a zero. And then some people have some distribution there where they have their claim, right?
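The subgroup-aware scorer idea mentioned above (accuracy divided by a number based on fairness or stability) can be sketched roughly as follows. This is only an illustration in plain Python, not the Driverless AI scorer API: the function name `subgroup_penalized_accuracy`, the choice of penalty (one plus the gap between the best and worst subgroup accuracy), and the `groups` argument are all assumptions made for this example.

```python
from collections import defaultdict


def subgroup_penalized_accuracy(actual, predicted, groups):
    """Overall accuracy divided by (1 + gap between best and worst subgroup).

    Hypothetical metric for illustration only. `groups` holds one subgroup
    label per row, for example the state a customer lives in.
    """
    if not actual or not (len(actual) == len(predicted) == len(groups)):
        raise ValueError("inputs must be non-empty and of equal length")

    hits = defaultdict(int)
    counts = defaultdict(int)
    for a, p, g in zip(actual, predicted, groups):
        counts[g] += 1
        hits[g] += int(a == p)

    # Accuracy inside each subgroup, then the spread between subgroups.
    per_group = {g: hits[g] / counts[g] for g in counts}
    overall = sum(hits.values()) / len(actual)
    gap = max(per_group.values()) - min(per_group.values())

    # A model that treats all subgroups equally keeps its plain accuracy;
    # a model that is much worse on one subgroup is scored lower.
    return overall / (1.0 + gap), per_group
```

A leaderboard ranked by such a number would prefer a model that is equally good for Minnesota and New York over one that is slightly more accurate overall but much worse for one of the two.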

Now, the question is how do you make a model that predicts all these zeroes and then all these other bumps at the same time? That is a custom recipe that does better than any other model before because it builds two models at once. One is saying, “Classify me whether I am bigger than zero or not, and once I’m bigger than zero, then how big is my loss?” And so, I can have two models. One classifier and one regressor work together, and then they will make a better predictive model overall. And that’s just one of many custom recipes that we are shipping out of the box now, and it’s automatically detecting the case where it needs to be applied.
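The two-model idea for zero-inflated targets can be illustrated with a small sketch. It is not the recipe shipped with Driverless AI: to stay dependency-free, the "classifier" here is just the share of nonzero claims per segment and the "regressor" is the mean of the nonzero claims per segment, and the names `fit_two_part_model` and `predict_expected_claim` are hypothetical. The point is only how the two parts combine: expected claim = P(claim > 0) × E[claim | claim > 0].

```python
from collections import defaultdict


def fit_two_part_model(segments, claims):
    """Fit a toy two-part model per segment.

    Part 1 (stand-in for a classifier): probability that the claim is > 0.
    Part 2 (stand-in for a regressor): mean claim size given that it is > 0.
    """
    n = defaultdict(int)
    n_positive = defaultdict(int)
    sum_positive = defaultdict(float)
    for segment, claim in zip(segments, claims):
        n[segment] += 1
        if claim > 0:
            n_positive[segment] += 1
            sum_positive[segment] += claim

    model = {}
    for segment in n:
        p_nonzero = n_positive[segment] / n[segment]
        mean_positive = (
            sum_positive[segment] / n_positive[segment] if n_positive[segment] else 0.0
        )
        model[segment] = (p_nonzero, mean_positive)
    return model


def predict_expected_claim(model, segment):
    """Combine both parts: P(claim > 0) times the expected size of a claim."""
    p_nonzero, mean_positive = model[segment]
    return p_nonzero * mean_positive
```

In a real pipeline both parts would be learned models trained on engineered features; the combination step stays the same.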

Then the BERT models, the image model, as I mentioned those are not very explainable, but they’re really good, right? Maybe that’s not a bad thing. And then we also work on the epidemic models, and I’ll show you that in a second. But as you can imagine, there’s a lot of effort going into this explainability because everybody wants to know what’s happening. At the same time, everybody wants highest accuracy. Usually, some of the people want both. Some want one only, but there’s a trend towards, “Give me the best and explain it,” and that’s, of course, not easy. We have even more control over feature engineering. So, how do you train models in the absence of previous data? If you don’t have data, how do you create a model? In the case of COVID right now, right?

What would you do if you had no COVID in the past? Well, you would look at other things. You would look at maybe uncorrelated data that’s not the same old, same old. You would find maybe what are people saying in social media, what are people doing, how are they moving? Mobility maybe. Suddenly, you want to know if a town is being inundated by people from New York that are running away from New York going to Minnesota, let’s say. Suddenly, there’s all these people that now want their special shampoo that they always bought in New York and you suddenly don’t have it in Minnesota, and then every store runs out of that shampoo.

If you knew that, you could probably deliver that same kind of supply to Minnesota and then sell more of your goods, right? This whole supply chain optimization based on where people are moving. Suddenly, now you need to look at the data that says who’s moving where, where in the past that was not relevant because you were in the same place all the time. So, think outside the box, basically, and see which data sources can be used to augment your experiences. And then I know that you still have no labels, so yes, it’s a problem, but at least you have … Even in two weeks of data, let’s say, you can still get some early signals, right? You can see, okay, we are sensing the demand, so we are building demand-sensing models with our grandmasters to foresee from these early green [inaudible] where people are saying, “It’s okay. We’re getting out of this.” Suddenly, the demand will rise again. Can we smell that somehow?

You would not just look at the sales numbers over the last 12 months and 18 months and 24 months. Yes, that’s right. You would have to look at new data, short-term data, social media, behavioral data. Maybe you need more of those satellite imaging data where people are looking at which trucks are on the road where, how much oil do they have, who’s driving where, which parking lots are full or not, all this kind of modern approach that the hedge funds are taking with data science. That is going to be more important. So, you need to pay somebody to get that data and then to build models in the last two weeks and see if there’s any signal. How do you know there’s signal? Well, you need tools like Driverless to tell you which datasets are useful.

It’s a good challenge, but it only shows that data scientists are more important than ever. You don’t want to be just sifting some dataset that you were given six months ago. This is much more interesting to actually deal with the reality of the world. Let me go back to what’s new real quick to make sure that we covered that. You can export models in 1.9. You can export the model. Once you export it, it shows up in this auto site here, the ModelOps, and then you have a model where it shows you when it was trained and then where is it going to production. You can actually set here, deploy to production or to dev, and then you can look at the model as it scores and you can basically give an endpoint. You can query it. You can look at the distribution of incoming data, distribution of predictions going out, distribution of latency, distribution maybe of whatever you want.

You can make your own custom recipes here, and this is all Pythonic, so you’ll be able to have alerts. Like, “If this happens fall back to model number two. If this and this happens, fall back to GLM. If this and this happens, call me no matter what time,” and so on. All these things are what people are interested in. But another important aspect of this sharing here that I mentioned is that if you export it, someone else can import it, and you can tell who you want to be able to import it. I can make a model for my entire group and then everybody can get it, so we are no longer stuck on one instance, but we are able to share Driverless models with everybody else in the organization if we choose to. It’s a more fine-grained control of who does what.

And then the ModelOps storage place here where all the models are stored, that’s kind of the central place where these models will sit. Then the MLI UI, as I mentioned, got improved, so you’ll have more of these realtime popups. Every time something is done you can look at it. You don’t have to wait until it’s all done. You also can do custom recipes, so you can build your own MLI pieces where you can say, for example, “I want to build this and this Python recipe. Run me these models and then spit out these files.” And then you can grab those files out of the GUI, basically. We are still figuring out the best way for you to completely customize it all, but there is basically a precondition and a postcondition, if you want. You give us something, and we give you something, and that’s the contract of that recipe, so you can, in the end, customize a lot of the stuff to your needs.

This is the automatic project, as I mentioned. When you push that create leaderboard button, you get all these models at once. And as you can see, two of them are completed, two are still running right now, and the rest are in the queue. There’s going to be a queueing system, a scheduling system that will make sure that the machine isn’t overloaded when you submit 12 jobs at once. And if you had a multi-node installation of Driverless, then you will see more than two models running at the same time. You will run eight at the same time on a four-node system. Basically, you get more throughput and you get control by not having them all run at once and run out of memory, as is the case today.

Today, you would have to be more careful. Later, you can just submit it and then come back when it’s done. This is the residual analysis I mentioned. Also in 1.9, you can have these prediction intervals enabled and it will look at the distribution of the errors on holdout predictions, and then based on those errors it will say, “You’re in Minnesota. Error is pretty high. Here is your error band,” given the 90% confidence band. So, it does it in a heuristic way. It’s not guaranteed. I can never say, “This will be what happens tomorrow,” but I can only say, “Given what I saw in your model performance on your holdout data, these are the empirical prediction intervals, 90% of points had this error or less.”
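The empirical prediction interval described here ("90% of points had this error or less") boils down to taking a quantile of the absolute holdout residuals. A minimal sketch, assuming a symmetric band around the point prediction; the function names are hypothetical and the exact quantile rule used inside Driverless AI is not specified in this talk:

```python
import math


def empirical_error_band(actual, predicted, coverage=0.9):
    """Smallest absolute holdout error that covers `coverage` of the points."""
    if not 0.0 < coverage <= 1.0:
        raise ValueError("coverage must be in (0, 1]")
    abs_residuals = sorted(abs(a - p) for a, p in zip(actual, predicted))
    if not abs_residuals:
        raise ValueError("need at least one holdout point")
    # Number of holdout points the band has to contain (rounded to avoid
    # floating-point noise such as 5.999999999 instead of 6).
    k = math.ceil(round(coverage * len(abs_residuals), 9))
    return abs_residuals[k - 1]


def prediction_interval(point_prediction, band):
    """Symmetric heuristic interval around a new prediction."""
    return point_prediction - band, point_prediction + band
```

Lowering `coverage` to 0.6 gives a narrower band, which matches the remark that you can control the bands. Computing the band per subgroup (for example only on Minnesota rows) would give the wider error band for weak segments.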

You can look at the residuals or the absolute residuals of your predictions and you can say, “The quantiles of this error are so that this is where we end up in most cases.” And if you say only 60%, then you will get different bands, so you can control that. These are the BERT models. Also in 1.9, out of the box, you see at the bottom-left here all the different types of BERT models. Not just BERT, but DistilBERT, XLNet, RoBERTa, CamemBERT, or CamemBERT as we say in French, I guess. XLM-RoBERTa, all these different models are state-of-the-art models that are absolutely fantastic and they really shine. As you could see earlier, I showed you these numbers here from the validation set. Also in the test set, they translate. So, 40 log loss instead of 60 is a huge improvement of 50 here.

BERT is the best machine-learning model for NLP and will be in there out of the box. Best MOJO support even, so you can productionize it. The same for computer vision. You can upload a ZIP file with a bunch of folders. With images, JPGs, PNGs, whatever. You just pull it in, it will automatically make a dataset out of it. It will automatically assign labels to those folders and say, “Okay. These are all the mouse, these are all the cat, all the dog pictures,” and then you’ll have an image recognition problem out of the box solved with these pre-trained models that also have fine-tuning now so you can load these state-of-the-art image models and have fine-tuning.
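The folder-to-label convention for an uploaded ZIP of images can be illustrated like this. It is a sketch of the general idea (the name of the immediate parent folder becomes the class label), not the Driverless AI importer; the function names and the set of accepted file extensions are assumptions for the example.

```python
import zipfile
from pathlib import PurePosixPath

IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}


def labels_from_paths(names):
    """Map archive member names to (path, label) rows.

    The label is the name of the folder that directly contains the image.
    Directories, non-image files, and images outside any folder are skipped.
    """
    rows = []
    for name in names:
        path = PurePosixPath(name)
        is_image = path.suffix.lower() in IMAGE_SUFFIXES
        if name.endswith("/") or not is_image or len(path.parts) < 2:
            continue
        rows.append((name, path.parent.name))
    return sorted(rows)


def labels_from_zip(zip_path):
    """Read member names from a ZIP file and derive the labels."""
    with zipfile.ZipFile(zip_path) as archive:
        return labels_from_paths(archive.namelist())
```

The resulting rows form a two-column dataset (image path, label) that an image classification model can be trained on.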

The thing I mentioned earlier was that there will even be a custom problem type, so not only will you do classification and regression, but you can do segmentation or SEIR labeling or whatever you want to do. As long as you can define what the model does and what the score is, then you can do anything. So, you will not just have one column of predictions, but a column could be a whole mask of segmentation shapes. Like, “The human is here. The car is here. The house is here. The pedestrian, bicycle is here.” And all of that could be pixel-based maps that are part of the prediction of the model. And then your score is based on all of that, and the reality, and then you get some smart overlap, and so on. So more complicated models will work as well. We’re going to the grandmaster style AI platform vision and not just classification and regression.

It’s a challenge to do it all in one platform, but I think if anybody can do it, then our custom recipe architecture should be able to do it, so that’s our goal for this year. This is another hierarchical feature engineering thing, before I get back to questions. We’ll be able to control the levels of feature engineering as you want. You can say at the beginning only do target encoding, and then do whatever else you want to do with feature engineering. So, you can control the first layer. That could be a bunch of transformers or it could be just one. You can say, “I want to just do the date transformation,” where the dates get mapped into day of the year, day of the week, and so on, and then treat those as regular numeric features or categorical features.
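As an illustration of a first-layer date transformation, the sketch below maps an ISO date string to the kind of features mentioned (day of the year, day of the week, and so on) using only the Python standard library. The function name and the exact set of output features are assumptions for this example, not the built-in Driverless AI transformer.

```python
from datetime import date


def date_features(iso_date):
    """Expand a 'YYYY-MM-DD' string into simple numeric date features."""
    d = date.fromisoformat(iso_date)
    return {
        "year": d.year,
        "month": d.month,
        "day_of_month": d.day,
        "day_of_year": d.timetuple().tm_yday,
        "day_of_week": d.weekday(),  # Monday = 0, Sunday = 6
    }
```

The outputs can then be handed to later feature engineering layers either as numeric columns or as categorical ones, as described above.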

You can do embedding with BERT into vector space and then you run our high feature engineering pipeline. Okay. This was the custom MLI. You’ve seen the same screen before, but you can do any explainers that you want with visualizations. This was the epidemic model. That’s the last slide. We predicted reasonably well. Some of our teams were in the second … Actually, still are in the first or second or third or fourth place in Kaggle on predicting the COVID spread, and the peak, and how long it will take, and so on, all using models. Some of them are Driverless. Some of them are outside, even simpler models, but this is in collaboration with actual hospitals. This is a SEIR model where we modeled the evolution of the disease, how it spreads through different partial things that … People who are sick infect others who are not sick, right? People who are sick get better over time. People who recover, they don’t infect anybody and so on.

Each of these equations down here reflect one of those realities, and then you integrate those equations up from the starting point where only a few people are sick, and if you tell us what the total population is, then we’ll tell you how it evolves, roughly speaking, if you also tell us what the rate of exposure is, how quickly it spreads, and so on. There’s a bunch of features that you have to basically set for this model. Free parameters, as we call it. If you set those free parameters right, then the overall evolution will be very meaningful. If not, then it’s junk. This is an epidemiologist’s dream to set these parameters and then see what happens. The model that we fit is getting this fit of this epidemic model, but then it subtracts that from the signal, and what’s left is the residuals, and that’s being fitted by the rest of the Driverless pipeline.
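For readers who have not seen a SEIR model, here is a minimal sketch of the textbook form, integrated with simple Euler steps, plus the residual step described above (observed signal minus the epidemic fit). The equations are the standard ones, dS/dt = -βSI/N, dE/dt = βSI/N - σE, dI/dt = σE - γI, dR/dt = γI; the slide's exact equations are not reproduced in the transcript, so the parameter names `beta`, `sigma`, `gamma` and both function names are assumptions.

```python
def simulate_seir(population, initial_infected, beta, sigma, gamma, days, dt=0.1):
    """Integrate a textbook SEIR model; returns one (S, E, I, R) tuple per day.

    beta:  transmission rate (how quickly the disease spreads)
    sigma: rate at which exposed people become infectious
    gamma: rate at which infectious people recover
    """
    s = population - initial_infected
    e, i, r = 0.0, float(initial_infected), 0.0
    steps_per_day = round(1 / dt)
    history = [(s, e, i, r)]
    for _ in range(days):
        for _ in range(steps_per_day):
            new_exposed = beta * s * i / population * dt
            new_infectious = sigma * e * dt
            new_recovered = gamma * i * dt
            s -= new_exposed
            e += new_exposed - new_infectious
            i += new_infectious - new_recovered
            r += new_recovered
        history.append((s, e, i, r))
    return history


def residuals(observed_infected, history):
    """Observed infections minus the SEIR curve; what a second model would fit."""
    return [obs - state[2] for obs, state in zip(observed_infected, history)]
```

The free parameters (`beta`, `sigma`, `gamma`, and the starting conditions) determine whether the curve is meaningful; whatever the curve fails to explain ends up in the residuals, which is the part handed to the rest of the pipeline.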

So, you’ll get both the actual boost of the SEIR model, that’s the global behavior, and then you get the per-country evolution of this epidemic, and together you get one combined model all out of the box. This is also in 1.9. So, that’s it. Now, let’s get back to questions before I point you to the docs. Yes, AutoML. It’s not just parameter tuning or just feature selection, but it’s actually helping with the validation scheme. That’s a big one for me. It’s, “Given this dataset, how do I make a model that’s good on tomorrow’s data? Should I split randomly? Should I split by month? Should I split by city? Should I split by time? What should I do?” If you don’t do that right, then the rest almost doesn’t matter because if you just randomly shuffle and you know suddenly about these people that you shouldn’t know, as in the example earlier, or you know about the future that you shouldn’t know, and then you’re asking yourself, “Did I do well in the past,” and all these weird things, by random shuffling you can hurt yourself.

It doesn’t matter how well you tune the model after you random shuffle a time series. It’s just not going to work. What you have to do is you really, really have to understand your validation scheme, and only if that’s done right, only then can you actually start digging deeper into the tuning. That’s what the Kaggle grandmasters do. They spend one month on the validation scheme and then the remaining month on the tuning. So, AutoML should do that validation scheme step for you. And that’s our biggest challenge right now. We have 14 grandmasters, and I think four out of the top six in the world, and some of them are now thinking about how to automate these validation steps. Okay.
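The difference between a time-based validation split and a shuffled one can be made concrete with a small sketch. The helper names are hypothetical, and the "every other row" split below is only a deterministic stand-in for a random shuffle so that the example is reproducible.

```python
def time_based_split(rows, time_key, cutoff):
    """Train on everything strictly before `cutoff`, validate on the rest."""
    train = [row for row in rows if row[time_key] < cutoff]
    valid = [row for row in rows if row[time_key] >= cutoff]
    return train, valid


def leaks_future(train, valid, time_key):
    """True if training data contains time points at or after validation data."""
    if not train or not valid:
        return False
    return max(row[time_key] for row in train) >= min(row[time_key] for row in valid)


# Twelve months of toy data; ISO-style strings sort chronologically.
rows = [{"month": f"2020-{m:02d}", "sales": 100 + m} for m in range(1, 13)]

train, valid = time_based_split(rows, "month", "2020-10")
print(leaks_future(train, valid, "month"))              # time-based split
print(leaks_future(rows[::2], rows[1::2], "month"))     # interleaved split
```

With the time-based split the model is always validated on months it has never seen; with the interleaved split it has already seen later months while being scored on earlier ones, which is the kind of leak that no amount of tuning can repair.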

“What are arguments, pro and cons, for Driverless?” Well, the pro definitely is it can’t hurt, right? Unless you don’t have the money. Well, then you have to be an academic user and it’s still free. If you are a commercial user, you have a 21 day trial. That’s the current business model. At that point, you have to decide whether you want to keep using it or not. But I would say I haven’t seen a single person who has seen Driverless who then said, “I don’t really need it.” You always want to use something like that to have a baseline. Within seconds, it makes you the best baseline that you can come up with, given that dataset. I believe it’s the best automatic machine-learning platform out there. The only con is that maybe you don’t learn as much about it if you let the machine do it, so you should still learn about data science. You should still look at the results of Driverless and say, “Well, why did it do clustering and target encoding? What does it mean? What is XGBoost? What is LightGBM? What is TensorFlow?”

I’m not saying stop playing around with those tools, but don’t waste your time writing your own for-loops to tune something. That’s definitely not a good time invested, unless you are just learning still. “How long did it take you to release Driverless?” Well, the first slide was done in January or so, or December, around 2017, New Years, let’s say. The vision slide, actually, our CEO made, and all the pieces of that vision slide are now filled up. That started with custom recipes. “Bring your own ideas.” And I was like, “Whoa, that’s difficult.” And then he’s like, “Oh, to TensorFlow, to XGBoost, to open source, to everything.” I was like, “Okay. Okay. That’s also difficult, but let’s see.” And then try to connect it and have explainability in there and have accuracy in there and have these trade-offs, all these knobs. Make it a GUI. Make it also Python, productionized in Java. The whole pipeline.

I was like, “Oh, no. That’s difficult. How do you do that? How do you write Python code, custom recipes, and then have a Python code automatically get translated through Java for production?” How do you do that? It’s not easy, right? Everything takes effort. And then how do you run this in a Kubernetes environment on the virtual clouds or private clouds or whatever? All of this has to work. And we got there. I’m pretty proud of this. Unsupervised models, yes, we are working on those. Some of those are already unsupervised, as you saw in the visualization, but I would say that the real key is to say, “What’s in my data?” There are supervised approaches and unsupervised approaches, and some of the unsupervised approaches that we’re taking are in the pipeline of the model itself. Right?

Some of it, for example, could be that you have to do clustering to figure out what are the right distances between clusters, and that’s a good feature. But we don’t currently say, “Give us a dataset and I’ll tell you what are the five clusters.” That recipe doesn’t exist yet, but that could be one of the custom recipes in the future where you can bring any problem type. Yes, that is this one. Driverless is not currently doing a very good job at unsupervised, but we have ideas. Basically, you need to give us more data and let us tell you what is good about that new data. There’s no better tool than Driverless to quickly churn through all the different possibilities of feature engineering and telling which ones are good or not, and definitely will not replace data scientists. If anything, it will make it more that you want to be in these days.

Workflows. I’m not quite sure what you mean by, “When will Workflows be supported?” You can script everything in Python today. You can manage [inaudible] notebook that upload 12 datasets or make a custom recipe that does the upload that one time, but then it does 12 splits and all that. So, it’s [inaudible]. It’s definitely not a problem. Also, from R. And then we have an even bigger with the MOJO; the production pipeline. We cut off the models, we take the pipeline with the feature engineering and we stick it into the Spark environment, and in Spark you will have scoring going on that on the terabyte dataset, you convert the data with this feature engineering, and then the terabyte data will then suddenly be trained on a Sparkling Water cluster, on a GBM model or something, so you can combine the power of Driverless with a terabyte Sparkling Water installation in Spark, so everything will be scalable in big data. So, you can do anything in that land.

Patrick Moran:

Arno, I think we have time for one more question.

Arno Candel:

Yes. Yes, you can export MLI graphs as images. That’s right. You do have that. I think we’re working more and more on that. All of our pictures right now you can download, but I agree it’s not yet perfect, so we are working on more of this adding benefit to you. Also, we have more insights tabs that show up as insights, and some of those are Vega-Lite plots, so these are very portable plots that you can port in Word, port any language, any other visualization platform. So, we’re trying our best to make it consumable by you. Thank you very much for your attention today and I hope you can benefit from Driverless AI in this day and age. Thanks for your attention. Take care. Bye-bye.

Patrick Moran:

Yes. Thank you, everybody. I just want to remind you that we will provide you with a link to the recording in about a day or so. We want to wish everybody a great rest of your day, and thank you, Arno, for the great AMA session.

Arno Candel:

Thanks. Bye-bye.

Patrick Moran:

Bye.